Self-learning AI systems mark a significant technological inflection point: algorithms that can retrain, adapt, and optimize themselves with little or no human intervention. This shift from static models to continuously improving systems fundamentally changes how AI systems are built and deployed, and how they evolve.

Key Highlights

Here are the main takeaways from the research:

- AI systems have posted rapid benchmark gains, with scores on demanding benchmarks rising by up to 67.3 percentage points within a single year.

- MIT’s SEAL project demonstrates how a language model can generate its own training updates, evaluate their effect, and refine itself independently.

- The inference cost of a system performing at GPT-3.5 level fell more than 280-fold between November 2022 and October 2024, driven largely by increasingly capable small models.

- Governance frameworks are lagging significantly behind the pace at which technical capabilities are developing.

- Smaller self-improving models can now outperform larger static models through continuous refinement processes.

The New Frontier of Self-Learning AI

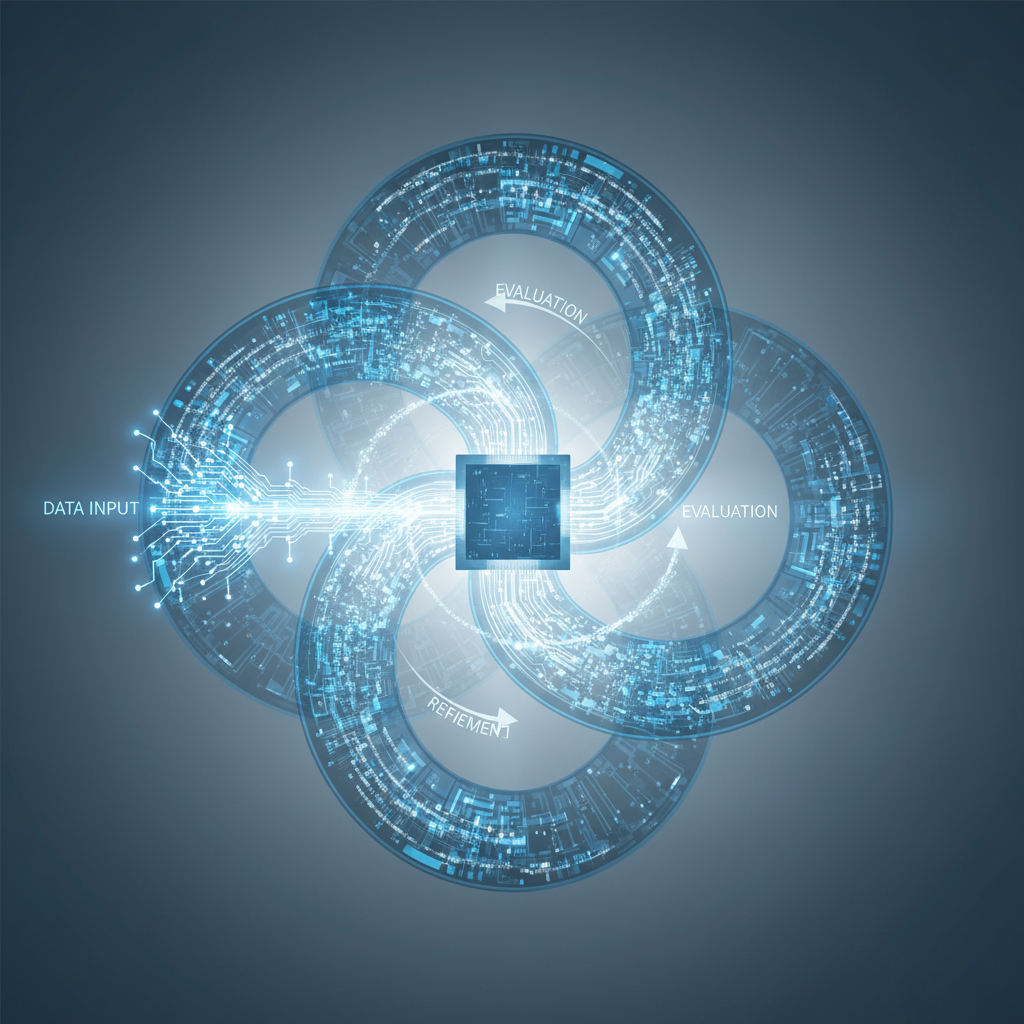

Self-learning AI represents a fundamental departure from conventional AI development, in which every improvement depends on human oversight. Unlike conventional systems, which remain static after deployment, these models can continue optimizing themselves through recursive learning: the system evaluates its own performance, identifies weaknesses, and implements improvements without external direction.
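The evaluate, diagnose, and improve feedback loop can be illustrated with a deliberately tiny example. The sketch below is a hypothetical toy, not how any production system works: the "model" is a single weight `w` in `y = w * x`, and the names `self_improve`, `mse`, and `gradient` are invented for illustration. Each cycle, the model scores itself on held-out data, uses the error gradient to locate its weakness, proposes an update, and keeps that update only if held-out error actually falls.

```python
# Toy self-improvement feedback loop (illustrative sketch, hypothetical names).
Data = list[tuple[float, float]]


def mse(w: float, data: Data) -> float:
    """Mean squared error of the one-weight model y = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def gradient(w: float, data: Data) -> float:
    """d(MSE)/dw: tells the model in which direction it is currently wrong."""
    return sum(2 * (w * x - y) * x for x, y in data) / len(data)


def self_improve(w: float, train: Data, held_out: Data,
                 lr: float = 0.05, cycles: int = 100) -> float:
    best_err = mse(w, held_out)                      # 1. evaluate itself
    for _ in range(cycles):
        candidate = w - lr * gradient(w, train)      # 2-3. diagnose weakness, propose a fix
        err = mse(candidate, held_out)               # re-evaluate the proposal
        if err >= best_err:                          # 4. reject changes that do not help
            break
        w, best_err = candidate, err                 # adopt the improvement
    return w


if __name__ == "__main__":
    train = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]     # underlying rule: y = 3x
    held_out = [(4.0, 12.0), (5.0, 15.0)]
    print(round(self_improve(0.0, train, held_out), 3))
```

The accept-only-if-better check is the important design choice: it is what turns a plain training loop into one that polices its own updates.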

Core Technical Mechanisms

The foundation of self-learning AI lies in architectures that enable continuous adaptation. MIT’s SEAL (Self-Adapting Language Models) project shows how a language model can generate its own "self-edits" (synthetic training data plus instructions for how to update on it), apply them through fine-tuning, and use the resulting change in task performance as a reward signal to learn which self-edits help. Repeating this cycle lets the system fold insights from earlier iterations into progressively more capable versions of itself. The process loosely resembles human learning, but it runs far faster and needs no direct instruction.
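SEAL itself trains a language model with reinforcement learning to write self-edits and applies them by fine-tuning, which is far beyond a short example. The sketch below keeps only the generate, evaluate, and refine skeleton and swaps in a much simpler technique: a (1+λ) random search in which the "model" is a short parameter vector, each candidate "self-edit" is a random perturbation, and `benchmark` is a made-up scoring function. All names and numbers are hypothetical.

```python
import random

# Hypothetical "ideal" parameters that the made-up benchmark rewards.
TARGET = [1.0, -2.0, 0.5]


def benchmark(params: list[float]) -> float:
    """Made-up benchmark score: higher is better, with a maximum of 0.0 at TARGET."""
    return -sum((p - t) ** 2 for p, t in zip(params, TARGET))


def propose_edits(params: list[float], n: int, scale: float,
                  rng: random.Random) -> list[list[float]]:
    """Generate n candidate 'self-edits' as random perturbations of the current parameters."""
    return [[p + rng.gauss(0.0, scale) for p in params] for _ in range(n)]


def self_edit_loop(params: list[float], rounds: int = 200, n_candidates: int = 8,
                   scale: float = 0.3, seed: int = 0) -> tuple[list[float], list[float]]:
    """(1+lambda) random search: adopt a candidate only if it beats the current version."""
    rng = random.Random(seed)
    best_score = benchmark(params)
    history = [best_score]
    for _ in range(rounds):
        candidates = propose_edits(params, n_candidates, scale, rng)   # generate
        scored = [(benchmark(c), c) for c in candidates]               # evaluate
        top_score, top = max(scored, key=lambda pair: pair[0])         # pick the best candidate
        if top_score > best_score:                                     # refine: keep real gains only
            params, best_score = top, top_score
        history.append(best_score)
    return params, history


if __name__ == "__main__":
    final, history = self_edit_loop([0.0, 0.0, 0.0])
    print(f"score {history[0]:.3f} -> {history[-1]:.3f}, params {[round(p, 2) for p in final]}")
```

Because the current version competes against its own candidates, the recorded score can never get worse from one round to the next; SEAL replaces the random perturbations with edits the model learns to write.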

Quantifying the Acceleration

The broader pace of AI progress provides context for these developments. According to Stanford HAI’s AI Index, scores on the MMMU, GPQA, and SWE-bench benchmarks, all introduced in 2023, rose by 18.8, 48.9, and 67.3 percentage points respectively within a year. Perhaps more significantly, economic efficiency has improved dramatically: the inference cost of a system performing at GPT-3.5 level fell more than 280-fold between November 2022 and October 2024. This acceleration challenges traditional assumptions about AI development timelines and resource requirements.
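To make the 280-fold figure concrete, the arithmetic below takes a hypothetical original cost $C_{\text{old}}$ for some fixed workload and shows what a 280-fold decrease implies for the new cost and for the percentage saved.

```latex
C_{\text{new}} = \frac{C_{\text{old}}}{280} \approx 0.00357\, C_{\text{old}},
\qquad
\frac{C_{\text{old}} - C_{\text{new}}}{C_{\text{old}}} = 1 - \frac{1}{280} \approx 0.9964
```

In other words, a 280-fold decrease is roughly a 99.64% cost reduction: a workload that once cost USD 1,000 would cost about USD 3.57.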

From Static to Dynamic Systems

Traditional AI development follows a predictable pattern in which improvements come from human-driven retraining and new architectural designs. Self-learning systems follow a fundamentally different trajectory: they can identify optimization opportunities and implement them autonomously, without waiting for the next human-directed development cycle. Because each improvement can build on the last, capabilities could compound and grow exponentially rather than linearly.

Real-World Applications of Self-Improving AI

Self-improving systems are already demonstrating practical value across multiple sectors. In healthcare, diagnostic tools that continuously refine their accuracy on new data are reaching specialist-level performance in identifying conditions from medical images. Industrial automation platforms employing wiz AI technologies can now adapt to changing production variables in real time, reducing waste and optimizing resource use without engineer intervention.

Democratizing Advanced Capabilities

One of the most significant impacts of self-learning AI is the democratization of advanced capabilities. Writing assistants such as QuillBot’s can now evolve to match specific user needs by learning continuously from interaction patterns. Financial services firms have deployed self-improving risk assessment systems that adapt to emerging market conditions and novel fraudulent behaviors without manual updates. These applications mark a shift toward more responsive, adaptable AI that serves diverse needs while continuing to improve over time.
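Adapting "without manual updates" is typically some form of online learning. The sketch below is a minimal, assumption-laden illustration rather than a description of any real financial system: a logistic-regression fraud scorer that takes one stochastic-gradient step on log-loss each time a transaction's true outcome becomes known. The class name and the two features (a scaled amount and a hypothetical `is_new_device` flag) are invented for the example.

```python
import math


class OnlineRiskModel:
    """Logistic-regression risk scorer updated one labeled example at a time (SGD)."""

    def __init__(self, n_features: int, lr: float = 0.1) -> None:
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = lr

    def score(self, x: list[float]) -> float:
        """Estimated probability that transaction x is fraudulent."""
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, x: list[float], label: int) -> None:
        """One SGD step on log-loss once the true outcome (1 = fraud) is known."""
        error = self.score(x) - label          # gradient of log-loss w.r.t. the logit
        self.w = [wi - self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * error


if __name__ == "__main__":
    model = OnlineRiskModel(n_features=2)
    # Hypothetical emerging pattern: transactions from new devices turn out to be fraud.
    # Features: [scaled amount, is_new_device].
    stream = [([0.2, 1.0], 1), ([0.2, 0.0], 0)] * 200
    for features, label in stream:
        model.update(features, label)
    print(round(model.score([0.2, 1.0]), 3), round(model.score([0.2, 0.0]), 3))
```

The model never needs a scheduled retraining run: each confirmed outcome nudges the weights, so a new fraud pattern starts raising scores as soon as labeled examples of it arrive.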

The Safety and Governance Challenge

Despite technical advancements, governance frameworks for self-learning AI remain fragmented and underdeveloped. Current approaches to building trust in AI systems have not kept pace with the autonomous nature of these new technologies. Significant gaps exist in controllability mechanisms, transparency tools, and alignment methodologies suitable for systems that can modify their own functionality. The risk landscape changes substantially when systems can evolve in ways their original designers may not have anticipated.
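One building block for transparency in systems that modify themselves is a tamper-evident record of every change. The sketch below, with hypothetical names throughout, chains SHA-256 hashes so that editing any recorded entry, or removing one from the middle of the chain, makes verification fail. It only makes after-the-fact tampering detectable and does not prevent modification; detecting truncation of the newest entries would need a separately stored head hash. The `params_hash` field (for example, a hash of the updated weights) is an assumption about what such a log would record.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """SHA-256 of a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class ModificationLog:
    """Append-only, hash-chained record of self-modifications (tamper-evident, not tamper-proof)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, description: str, params_hash: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"index": len(self.entries), "description": description,
                "params_hash": params_hash, "prev_hash": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each entry links to the one before it."""
        prev = self.GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body.get("index") != i or body.get("prev_hash") != prev:
                return False
            if _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = ModificationLog()
    log.append("fine-tune on self-generated data", "abc123")
    log.append("adjust learning-rate schedule", "def456")
    print(log.verify())
```

Auditors can rerun `verify` independently, which is the point: the system's own account of how it changed becomes checkable rather than taken on trust.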

The Preparedness Gap

Organizational and regulatory infrastructure shows worrying gaps in readiness for managing self-improving AI systems. Many corporate governance structures were designed for static AI deployments rather than continuously evolving systems. Regulatory harmonization between regions is minimal, which invites regulatory arbitrage: development may concentrate wherever oversight is weakest. Building safe deployment ecosystems requires new technical standards, monitoring approaches, and intervention capabilities, all of which are still at an early stage of development.

Building a Responsible Path Forward

The acceleration in self-learning capabilities demands correspondingly advanced governance approaches. Technical safeguards must evolve to include robust monitoring systems capable of tracking changes in model behavior over time. Organizations developing these systems need comprehensive risk assessment frameworks specifically designed for continuously improving systems rather than point-in-time evaluations. The industry would benefit from standardized benchmarks for measuring safety across different types of self-learning implementations.
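Tracking changes in model behavior over time can start with something as simple as comparing the distribution of a model's outputs on a fixed probe set before and after each self-update. The sketch below uses the Population Stability Index (PSI), one common drift metric, over equal-width bins of scores in [0, 1]. The 0.25 alert threshold is a widely used rule of thumb rather than a standard, and the function names and example scores are hypothetical.

```python
import math


def bin_fractions(scores: list[float], n_bins: int = 10) -> list[float]:
    """Share of scores (assumed to lie in [0, 1]) in each of n_bins equal-width bins."""
    counts = [0] * n_bins
    for s in scores:
        idx = min(max(int(s * n_bins), 0), n_bins - 1)   # clamp so 1.0 lands in the top bin
        counts[idx] += 1
    return [c / len(scores) for c in counts]


def psi(baseline: list[float], current: list[float],
        n_bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index; eps avoids log(0) when a bin is empty."""
    b = bin_fractions(baseline, n_bins)
    c = bin_fractions(current, n_bins)
    return sum((ci - bi) * math.log((ci + eps) / (bi + eps)) for bi, ci in zip(b, c))


def drift_alert(baseline: list[float], current: list[float],
                threshold: float = 0.25) -> bool:
    """Flag a self-update for review when output drift exceeds the threshold."""
    return psi(baseline, current) > threshold


if __name__ == "__main__":
    before = [i / 100 for i in range(100)]              # probe-set scores, previous version
    after = [min(0.99, s + 0.3) for s in before]        # scores after a hypothetical self-update
    print(round(psi(before, after), 3), drift_alert(before, after))
```

Each PSI term is non-negative, so identical distributions score exactly zero and any shift raises the index; a real monitor would track several such signals per update rather than one.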

Collaborative Solutions

Addressing the governance challenge requires collaboration among technical experts, policymakers, and implementation specialists. OpenAI and other leading organizations have begun developing technical controls that limit unauthorized self-modification while preserving beneficial adaptation. A balanced approach pairs broad access to development tools with graduated deployment protocols scaled to each system’s potential impact. Academic institutions, industry leaders, and government agencies must coordinate to establish clear guardrails that enable innovation while managing risks effectively.
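Controls on self-modification and graduated, impact-based deployment can be combined in a simple gate that every self-proposed update must pass. Everything here is hypothetical: the tier names, the per-tier budgets for how much a system may change itself without review, and the 0.95 safety-evaluation threshold are placeholders, not values any organization is known to use.

```python
from dataclasses import dataclass

# Hypothetical policy: the largest relative parameter change each impact tier may
# deploy autonomously. Anything larger needs explicit human approval.
AUTONOMOUS_CHANGE_BUDGET = {"low": 0.10, "medium": 0.02, "high": 0.0}


@dataclass
class ProposedUpdate:
    relative_param_change: float   # e.g. norm(new - old) / norm(old)
    safety_score: float            # pass rate on a safety evaluation suite, in [0, 1]
    human_approved: bool = False


def gate(update: ProposedUpdate, tier: str, min_safety: float = 0.95) -> str:
    """Return 'deploy', 'hold_for_review', or 'reject' for a self-proposed update."""
    if update.safety_score < min_safety:
        return "reject"                                  # a failed safety eval is never overridden
    if update.relative_param_change <= AUTONOMOUS_CHANGE_BUDGET[tier]:
        return "deploy"                                  # small enough to ship autonomously
    return "deploy" if update.human_approved else "hold_for_review"


if __name__ == "__main__":
    update = ProposedUpdate(relative_param_change=0.05, safety_score=0.99)
    for tier in ("low", "medium", "high"):
        print(tier, gate(update, tier))
```

Checking safety before approval means a human sign-off cannot override a failed evaluation; whether that ordering is right is a policy choice, not a technical given.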

The emergence of self-learning AI creates both remarkable opportunities and significant responsibilities for how we design, deploy, and govern these systems. As capabilities continue to advance at an accelerating pace, our technical, organizational, and regulatory frameworks must evolve to ensure these powerful tools remain aligned with human values and needs. Finding this balance will determine whether the shift to autonomous learning systems ultimately enhances human flourishing or introduces new challenges to our technological landscape.

Sources

OpenAI Blog

Stanford HAI

Google DeepMind

World Economic Forum

MIT Technology Review